Recap - Booking.com - Scaling our customer review system for peak traffic

How does Booking.com manage 250 million reviews from customers?

Reference:

Introduction

Business case

Booking.com’s review system is a core part of our platform.

Booking.com’s reviews maintain high authenticity, as only guests who have actually booked and stayed at a Booking.com property are invited to provide feedback.

Reviews are based on scoring, multiple-choice questions, photo uploads and textual feedback.

Backend

High availability and low latency are essential.

The backend has to handle tens of thousands of requests per second.

P99 response times less than 50 ms.

The system serves from basic lookups to more complex filters, sorters and aggregation across many accommodations.

There are 250 million reviews and each review contains:

answers to some objective questions

rating on various parameters

textual feedbacks

Storing such vast data in memory on a single machine was never a viable option. Instead, Booking.com partition data across multiple machines and maintain replicas of these partitions to safeguard against hardware or network issues.

The challenges of scaling

Core problem

Scaling challenges at Booking.com involved anticipating high traffic, particularly during the summer, as the first peak season post-pandemic approached.

Booking’s capacity tests and forecasts indicated the need for a scaling strategy to avoid service disruptions. Ensuring a seamless experience for users was paramount. Additionally, Booking aimed to accommodate future innovations, such as new product launches, which could increase system load.

Sharding

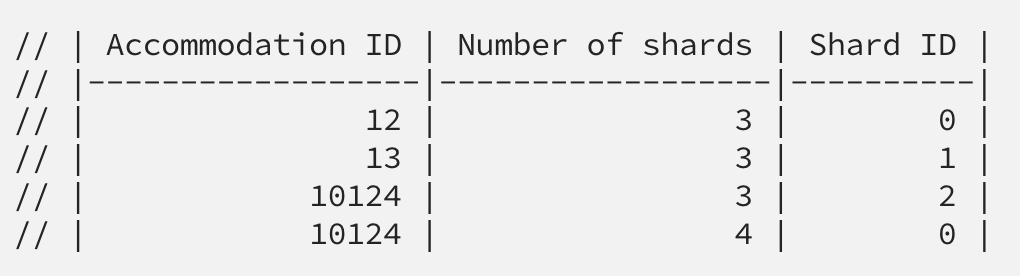

partitioning key = accommodation_id (hotel_id, property_id, etc.)

Whenever loading a review into our system, its property ID will decide in which shard it will load. Example:

ACCOMMODATION_ID % NUMBER_OF_SHARDS

Whenever a user requests reviews for a particular property ID, the routing layer will apply a hashing algorithm to resolve the shards from which to fetch the actual data.

Problem

A naive approach would be to simply add the new hardware and let the sharding do its job (resharding)

It requires a shutdown of one availability zone while the data is redistributed.

Another option was to create a separate cluster for resharding and eventually replace the current one. However, this approach was too expensive due to the additional hardware required compared to the hashing algorithm method.

We need a solution:

No need for more than the bare minimum of hardware required to scale

No noticeable negative impact on users during the upscaling

Possibility to incrementally move to the new setup and further being able to fallback at any given time during the process

Solution

Jump Consistent Hash Algorithm

To facilitate system scalability, Booking implemented a specific hashing algorithm (A Fast, Minimal Memory, Consistent Hash Algorithm by John Lamping, Eric Veach)

Limitation of “normal” Consistent Hashing by David Karger

Getting an even distribution of keys across nodes can be a challenge

We will need to have a high number of virtual nodes to get an even distribution of keys across nodes.

Higher memory requirements

The memory requirement is proportional to the (number of servers) * (number of virtual nodes per server)

Benefits of jump consistent hashing

No storage requirements.

Faster.

Even key distribution.

Even workload distribution.

// jump consistent hashing algorithm

int32_t JumpConsistentHash(uint64_t key, int32_t num_buckets) {

int64_t b = 1, j = 0;

while (j < num_buckets) {

b = j;

key = key * 2862933555777941757ULL + 1;

j = (b + 1) * (double(1LL << 31) / double((key >> 33) + 1));

}

return b;

}What’s next?

Setting up new hardware

After selecting the solution, Booking.com immediately provisioned new hardware, boosting capacity by 50% as predicted.

This new hardware adopted a sharding strategy, operating as if part of a cluster with 50% more shards.

Meanwhile, the existing shards continued to function without knowledge of the new ones, while the newly provisioned shards began populating their data.

Running both sharding mechanisms in parallel

Consistent hashing ensures a smooth transition when increasing the number of shards – keys move only from old to new shards, preventing unnecessary rearrangements.

Initially, coordinators only recognize the old scheme in an N-shard setup, routing traffic accordingly. Until the new shards have fully loaded the reassigned keys, coordinators (nodes of our routing layer) are only aware of the old scheme and route traffic only to the old shards.

At any time we still had the option to simply fall back to the old sharding scheme and completely discard the newly added hardware.

Learnings

Observability is key

We've configured alerts for critical metrics, and integrated instrumentation into applications, enabling effective performance improvements and scaling.

This observability was essential for predicting hardware needs and safely executing system scaling.

Plan in advance

Designing systems for future scalability is wise, and choosing the Jump hash sharding algorithm years ago proved beneficial. It streamlined recent upscaling efforts without adding implementation complexity, resulting in cost savings and enhanced safety throughout the process.

Have fallbacks ready

Have fallbacks ready because things can and will go wrong. It's critical to plan for failure in any critical system.

Whenever we introduce significant changes, we run the old and new systems in parallel until we can safely drop the old system, a practice that has saved us from unpleasant user experiences multiple times.